The Land and the Edge: Checkpointing after a year of Claude Code

Claude Code turned one recently. I've been using it for much of its existence and the model API for a few years before that. This felt like a good time to write down some reflections on the model, the harnesses around it, and what all of this means for someone who spends their days doing research. Much of this comes from conversations with colleagues who are navigating the same shift. The consensus, if you can call it that, is somewhere between exhilarated and existential.

The end of the beginning

Let me start with a specific moment that changed how I think about LLMs.

Sometime in early 2024, I was in a meeting with folks across research and product. The topic: something about onboarding and scaling a particular pipeline? Several ideas were being thrown around workflows, graph-based flows, offline optimization. I have been playing with Sonnet 3 for a while and thought about generating the flow graph and optimizing it with its own feedback based on evaluations (what would be called ralph-loop today). Everyone agreed it was a good idea and the discussion continued about how to scope this, resource it, get it done. I opened my laptop, wrote this as a system prompt on the console and within few minutes I was demoing a working proof of concept.

This was an oh shit moment. Not because the idea was particularly novel, it wasn't. But because my researcher instincts had been screaming the usual warnings: the model might not have the right inductive bias, we need to think about failure modes, let's design an experiment first. The research hat was firmly on. Meanwhile, Sonnet 3 just... did the thing.

From there I went on a build streak in my spare time. Several projects that would have taken weeks to even scope in a traditional research setting. The insight was simple and, in retrospect, obvious: if you can abstract something to code or tokens that the model can take as input and produce as output, then it's game on. The barrier isn't the model's capability; it's our ability to frame the problem in a language the model speaks. This mirrors the tokenization principle we see across modalities: reduce it to a sequence, and we can train a model, reinforce it, and use it.

On Boundaries and the Inertia

Years later, anthropic models are still my go-to for most of my tasks, be it coding with cursor or API use. This is mostly because I know the boundaries. I understand where the model would go wrong, where it would excel, and how to prompt it to get what I need. This is what we call taste, another vibe term that has been going around lately.

This is worth pausing on, because there's a persistent discourse about which model is the best. One group champions one model. Another group swears by a competitor. I've come to believe much of this comes down to familiarity.

| Familiar Model | New Model | |

|---|---|---|

| Boundaries | Known and internalized | Unknown, discovered through failure |

| Prompting style | Adapted over time | Requires recalibration |

| Trust level | Calibrated | Over- or under-trusted |

| Productivity | Optimized | Startup cost |

Models are constantly evolving. The model you dismiss today based on a bad experience might have been fine or even better, you just didn't have the muscle memory for its quirks. The switch cost isn't just about learning new APIs or UIs. It's about re-learning the boundaries of a system. And that only happens through sustained use, not through reading benchmark tables. I'm not saying don't switch. I'm saying recognize the cost and understand that your assessment of a model is deeply entangled with your history of using it.

Research: The Cliff

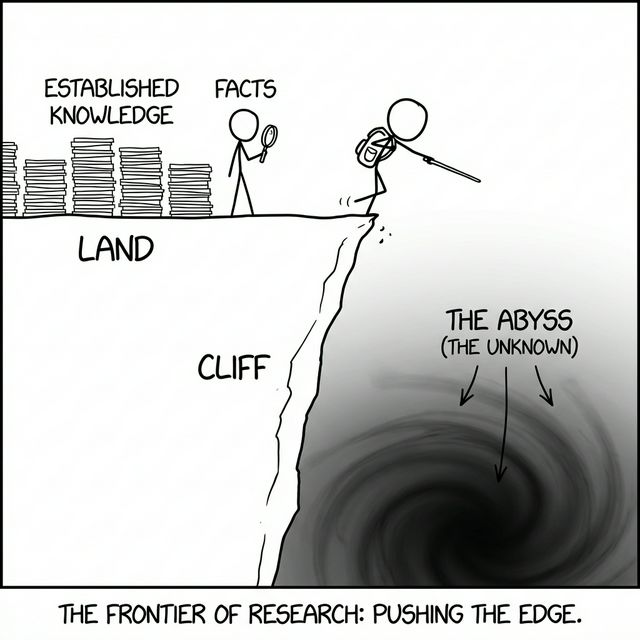

Here's a framing I keep coming back to in conversations about research:

Research is walking along the edge of a cliff. Walk too far inward towards the land and you end up doing work that's nice but not impactful. Fall off the other side and you're in the abyss-nothing works, nothing matters, and the ever lasting depression of why does anything matter is what it is.

The metaphor is useful because it separates three zones:

- The land: established knowledge, known methods, solved problems. Safe. Productive. Not where breakthroughs happen.

- The edge: the frontier, where open questions live, where the risk-reward ratio is highest. This is where research actually happens.

- The abyss: the unknown that is too unknown-problems we don't have the tools or frameworks to even formalize yet.

The Land, The Edge, and The Abyss

Standing on the Land

I've been using LLMs and agents for everything: brainstorming research findings, writing papers, running analyses, iterating on experimental results. The workflow that has surprised me the most: set up a VM, point Claude Code at a repository with some skills/markdown instructions and a dataset, dangerously skip permissions, and let it go. Run experiments. Analyze results. Iterate. Write it all up to markdown or LaTeX.

Research ideas that I have the foundations for, I find models today are able to critic it, find similarities, and apply it onto a different dataset, a different domain, a different configuration. These used to take weeks. With the right context in place, Claude Code (in my experience) handles these in a fundamentally different timeframe.

The key word there is context. This connects directly to prompting strategies: descriptive context constrains the model's output space and leads to more precise results. When I give Claude Code a well structured repository with a new algorithm, methods, and prior results, I'm essentially providing the descriptive prompt that enables high-quality autonomous work.

As a researcher, I've grown more confident about letting it work in parallel while I focus on the next experiment.

Walking on the Edge

Models today are excellent at exploiting existing context. But the research ideas themselves, from scratch without rich prior context, are not yet there.

This isn't a criticism. It's a characterization of where the model sits on the cliff. They can be left to operate confidently on the land. They can walk right up to the edge. But when they need to take a leap, they collapse.

The automation of research is a path in the land. AI can produce discoveries, extensions, and optimizations. That's valuable and in fact can be revealing of the landscape. But the cliff edge is still walked by humans, at least for now.

What is the Right Question to Ask?

This reframes the problem. It's not "will AI replace researchers?" It's "what is the right question to ask AI?"

Research has always been about exploration of the boundaries. The question that sits precisely at the cliff's edge, not too safe or too reckless, is the hard part. It always has been. The difference now is that once you have the question and some initial signal, the execution on the land is increasingly handled by the tools.

| Zone | What AI Does Well | What Researchers Do |

|---|---|---|

| The Land | Extend, replicate, automate, evaluate | Provide context and validate |

| The Edge | Brainstorm, suggest adjacent ideas | Identify the right questions, judge novelty |

| The Abyss | Not much (yet) | Occasionally fall in and come back with something |

The table will shift. Models will get better at operating on the edge. But the asymmetry today is clear: AI is strongest where the ground is firmest.

Looking Ahead

A year into Claude Code, I find myself in a place I didn't expect: more productive, more trusting of the models and harness, but also more convinced that the hard part of research was never the execution. It was always the question. The land will keep expanding. The tools will keep getting better. The models of the future will push further toward the edge. But for now, the most valuable thing a researcher can do is something no model does well yet: decide where to walk.

This post reflects personal observations over the recent years. I use models, LLMs, agents, and AI interchangeably in this post. Written with Claude Code.